This article was written by Nathan Field and Jake Baker, two great people I had the privilege of mentoring in 2017.

Although search algorithms change frequently, the underlying technology behind them does not.

By taking the time to learn the technical aspects of search, you will develop digital marketing skills that survive any passing change or algorithm update. This SEO tutorial will teach you the fundamentals of search engine optimisation for the modern web.

Let’s begin…

Definition of Search Engine Optimisation (SEO)

Search Engine Optimisation (SEO) is how marketers go about making sure that their web pages are both indexed and ranked well by search engines. Ultimately, SEO is the optimisation of your content against a user’s search phrase.

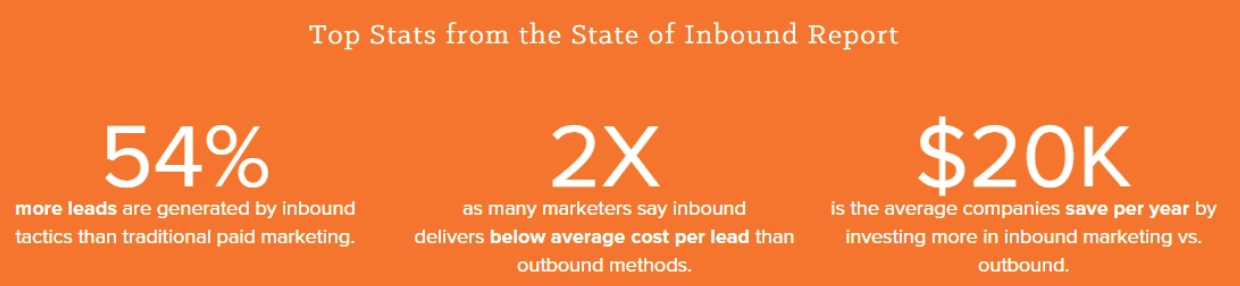

Above all else, SEO is a marketing channel that loosely falls under the category of ‘inbound marketing’. Inbound marketing is a strategy designed to target customers who are looking for the products or services you sell, and are finding their way to you.

Search engines (as a channel) provide a way for these customers to find, visit and ultimately trust you. But as we’ll learn in this article, search engines are also a ‘customer’ in their own right, and have ‘needs’ that must also be met.

Why You Need to Be on Page One

Typically the most popular websites (or the best optimised) will be displayed on page one of the search engine results pages (SERPs). Your goal as an SEO marketer is to get your website on page one. Here’s why…

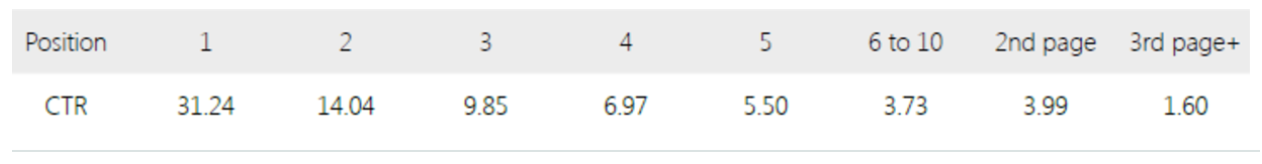

Research shows that the top 10 ranked websites (on desktop search) get around 70% of all clicks.

As you can see, the number one spot is getting a third of the clicks. This is even further reinforced when you consider there are literally trillions of searches per year. That’s right. Over 1,000,000,000,000 searches. Big number, right? Time to capitalise.

The good news is that it’s not as hard to do as you think. The real skill is perseverance. Most people give up after a few days, weeks or years. Search engine optimisation is typically a long-term effort. You need to work hard to get up the rankings (and stay there).

The name of the game is incremental progress, as with most good things in life.

Let’s start at the beginning…

A Quick History of SEO

Today when we think of a search engine, we have a fairly solid notion of how it works: a document index of the web, accessible and queryable via a search bar. But it wasn’t always like this. The first search engines behaved more like directories.

A directory? Yep, a directory. Remember the days of the Yellow Pages? If you were looking for a plumber, you would open the directory, flip across to the services section and scan the list for a ‘plumber’. The first search engines worked like this too. But the web was a lot smaller then (maybe fewer than 1,000 pages per country).

Dmoz.org is one example of such an early directory (and is still influencing search results today). Using it would involve visiting the home page (you would have memorised the URL), selecting a topic, then a sub-topic, and then choosing from a limited set of results.

“Only a few options!”, you exclaim, “how did DMOZ know what was important to display?”. Great question. Frankly, a human told them. Plain and simple, a person like you and me maintained the directory.

This short era then gave way to a new kind of ranking system, primarily based on keyword density or frequency. Directory companies realised that they could count the number of relevant words, or ‘keywords’, on a given page and use this to determine the subject matter. So if I went to a directory like Yahoo, the top results for my search ‘plumber in Surrey Hills’ would likely be the pages that listed their Surrey Hills address a few times on each page, and mentioned the words ‘plumber’ and ‘plumbing’ most frequently.
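To make the idea concrete, here is a minimal sketch of keyword-density ranking. It is not how any real directory was implemented; the page names, page text and the `keyword_density` helper are all invented for illustration.

```python
import re
from collections import Counter


def keyword_density(text: str, keywords: list[str]) -> float:
    """Share of the words in `text` that are one of the target keywords."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    counts = Counter(words)
    hits = sum(counts[keyword.lower()] for keyword in keywords)
    return hits / len(words)


# Hypothetical pages, made up for this example.
pages = {
    "surrey-hills-plumbing": "Plumber in Surrey Hills. Our plumber offers plumbing repairs.",
    "general-trades": "We list many trades including one plumber and several builders.",
}

keywords = ["plumber", "plumbing"]

# Rank pages by density, highest first.
ranked = sorted(
    pages,
    key=lambda name: keyword_density(pages[name], keywords),
    reverse=True,
)
print(ranked)  # ['surrey-hills-plumbing', 'general-trades']
```

The first page wins simply because 3 of its 9 words are target keywords, against 1 in 10 for the second. Nothing in the score measures whether the page is actually any good.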

All that was well and good, until SEOs realised it could be gamed. Webmasters (people who own/run websites) began ‘keyword stuffing’ (filling the page with target keywords). White font on a white background was one example (the search engine would see it, but not the user). Meanwhile, the sheer volume of content was growing faster than the directories could keep up with. There had to be a better way…

In January 1996 Larry Page and Sergey Brin began working on this problem as PhD students at Stanford University, and ultimately developed a concept called PageRank. Since then, Google has become the gold standard in sorting the world’s information. Let’s consider how they did it.

Links Are People Too

In order to understand why PageRank is so vital, we need to understand how search engines actually discover content on the web.

It’s easy to perceive Google as an all-knowing behemoth of webpage knowledge. But it’s not. Your website isn’t hosted on ‘Google’ – your website is a group of documents (.html, .pdf, .jpg, .gif) floating around the Internet, hosted somewhere in the world. To be able to search the web, Google must first create an index of the web. An index is basically a list of things. Google creates this index by ‘crawling’ the web.

To do this, Google employs what are commonly referred to as ‘web crawlers’, or ‘spiders’. Conceptually, a crawler is just like you and me – making its way through the web by following links. It is a software program, bot or script hosted by Google that visits a site and adds all the links on that site to a list for it to index later. There are more bots on the internet than people.

As you can see, the crawler that indexes the web relies on links to be able to move between websites. Links therefore underpin Google’s (and people’s) ability to navigate the Internet. Imagine a webpage with no links pointing to it or from it. No one would find it (including the crawlers). Links underpinned PageRank.
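Link-following discovery can be sketched in a few lines. This is a toy model under stated assumptions: the ‘web’ is a hypothetical in-memory dictionary rather than real HTTP requests, and the page names are invented. A real crawler would also need fetching, parsing, politeness rules and a great deal more.

```python
from collections import deque

# Hypothetical link graph: each page maps to the pages it links to.
LINKS = {
    "home": ["about", "blog"],
    "about": ["home"],
    "blog": ["post-1", "home"],
    "post-1": [],
    "orphan": ["home"],  # links out, but nothing links to it
}


def crawl(start: str, links: dict[str, list[str]]) -> list[str]:
    """Return pages in the order a breadth-first crawler would discover them."""
    discovered = [start]
    seen = {start}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                discovered.append(target)
                queue.append(target)
    return discovered


index = crawl("home", LINKS)
print(index)              # ['home', 'about', 'blog', 'post-1']
print("orphan" in index)  # False
```

The `orphan` page exists, but because no other page links to it the crawler never arrives there, so it never makes it into the index.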

Back in the early days (early 2000s) Google wasn’t so great at crawling. Better than the rest, but nothing compared to today. It would take them up to a month to crawl the whole web, then a week to index it, then another week to update their databases on what’s out there. Not the best user experience if the results Google is serving up take that long to be refreshed!

At this point, Google didn’t prioritise crawling the top sites that are visited most often. But in order to serve up-to-date search results, these sites needed to be crawled more often to account for their higher volume of users and therefore queries. Google realised they should crawl important sites more frequently and, to help determine which sites to prioritise in this way, decided to segment the web based on a page’s ‘PageRank’.

But how did they know which sites got a higher rank? The more reputable (popular referrers) and relevant (text keywords) links you have pointing to your page, the more that Google’s PageRank system will consider your page as valuable.

PageRank → influenced by the number and quality (popularity, relevance) of links to a page. Helps determine how important the website is. It’s how Google and other search tools can tell that cnn.com matters more than the local pub’s website (to some people at least 🍻).
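For the curious, the ‘number and quality of links’ idea can be sketched with the simplified, textbook form of PageRank. This is emphatically not Google’s production system. The damping factor of 0.85, the iteration count and the tiny link graph are assumptions made for illustration, and the sketch assumes every linked page also appears as a key in the graph.

```python
def pagerank(
    links: dict[str, list[str]],
    damping: float = 0.85,  # assumed value, commonly used in textbook examples
    iterations: int = 50,
) -> dict[str, float]:
    """Simplified PageRank by repeated redistribution of rank along links."""
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page starts each round with a small baseline share.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, targets in links.items():
            if targets:
                # A page splits its rank evenly between the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outbound links spreads its rank over everyone.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical web: three pages link to the news site, nobody links to the pub.
web = {
    "news-site": ["blog-a"],
    "blog-a": ["news-site"],
    "blog-b": ["news-site"],
    "local-pub": ["news-site"],
}

ranks = pagerank(web)
for page, score in sorted(ranks.items(), key=lambda item: item[1], reverse=True):
    print(f"{page}: {score:.3f}")
```

In this toy graph `news-site` ends up on top because three pages link to it, while `local-pub` and `blog-b` sit at the bottom with only the baseline share. Note that `blog-a` also scores well purely because the highly ranked `news-site` links to it, which is the ‘quality’ half of the story.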

Why Links Will Always Matter

Because crawlers travel through links, we can start to see why Google values inbound links so much. Basically, if the world thinks you have great and relevant content, they will link to you, Google will elevate you, and it becomes a self-perpetuating cycle. Links links links.

Now we know how Google crawls the web: with spiders, through links, determining PageRank. This is fundamental to understanding why the concept of ‘link building’ is so crucial. Re-read the last few paragraphs if you’re still not 100% clear here.

Why Link Building Matters

As you can see, link building matters. Let’s explore why with a quick example.

Imagine your web page is a student in middle school: Tom. If lots of people referred to Tom as the ‘best football player’, this would create credibility for Tom when others asked ‘who’s the best football player?’. Once enough people thought Tom was ‘the best football player’, we would make him football captain. What does this mean in the context of a search engine? Tom’s now top of the Google pile, because the search tool sees the credibility he has.

So, how does Google know what people are saying about your website? The answer is simple. The anchor text of an inbound link is essentially a vote for your site for that phrase. For example, a link to a website about Brent Harvey with the phrase ‘best Australian Footballer’ will pass credibility to that content for that phrase.
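The ‘anchor text as a vote’ idea can be illustrated with a simple tally. This is only a sketch of the concept, not a description of how any search engine weighs anchor text. The URLs and link data below are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical inbound links as (target URL, anchor text) pairs.
inbound_links = [
    ("example.com/brent-harvey", "best Australian footballer"),
    ("example.com/brent-harvey", "best Australian footballer"),
    ("example.com/brent-harvey", "click here"),
    ("example.com/other-player", "best Australian footballer"),
]


def anchor_votes(links):
    """Count, for each anchor phrase, how many links point at each target."""
    votes = defaultdict(Counter)
    for target, anchor in links:
        votes[anchor.lower()][target] += 1
    return votes


votes = anchor_votes(inbound_links)
top_page, count = votes["best australian footballer"].most_common(1)[0]
print(top_page, count)  # example.com/brent-harvey 2
```

The ‘click here’ link still counts as a link, but it casts no vote for the phrase we care about.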

The most difficult thing in this latest development is that links are on other people’s websites. Linking is a key factor in both popularity (how popular is that website) and relevance (what they say about your link). What a web of intricacy.

With this in mind, do you think the phrase ‘all PR is good PR’ holds true, or not?

This all sounds fairly intrusive, doesn’t it? “It’s my website!”, you say, “Google doesn’t know how to best serve my content on mitochondria!” Correct – which is why it is mostly up to you (the search engine optimiser).

Google does, however, do an amazing job of interpreting your site. We just need to ensure our website is laid out in a way that gives Google’s crawlers an easy path around the site.

Basic SEO Optimisation Strategies

Here are a few examples of this type of basic optimisation:

- Give crawlers a few tips – use a robots.txt file in your web root folder to tell crawlers what to visit and how often. This is helpful if you have legacy content on your website, or if you have a large volume of static pages that don’t change often.

- Don’t put valuable content deep in your site. If you want someone to read about your new lotion product, don’t put it at www.mysite.com/products/fall-products/1176/product-3. Serve it up at www.mysite.com/anti-fungal-lotion.

- Keep everything in the family – don’t split your valuable content between domains or subdomains – blog.mysite.com is a different domain to www.mysite.com.

- Seriously—don’t split your content between subdomains. Such a bad idea.

- blog.website.com and website.com are two different sites according to crawlers

- Try to control the relevant text in your inbound links, otherwise known as the “anchor text”. For example, a link to your article on the newest iPhone should read “iPhone 8 review” rather than “the newest iPhone is waaaay too expensive in my opinion”.

- Crawlers interpret text – for example, they have only recently learned how to read images and still struggle to read embedded PDFs. Moreover, the sheer resources required to do so may prevent the content from being indexed regardless. If you can’t make your content text, use alt text (the alt attribute) on all your non-text content to help crawlers attribute keywords to it.

- Website metadata – This is information hidden within the HTML of the website that tells search engines what your website’s title, description and keywords are. Why HTML? Google understands code, so we have to speak their language. Much more on this later.
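The robots.txt tip from the list above is easy to try out locally. The sketch below uses Python’s standard `urllib.robotparser` module to check a sample file. The rules themselves (the `/legacy/` folder and the 10 second delay) are hypothetical, and `Crawl-delay` is a non-standard directive that not every crawler honours.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for www.mysite.com.
ROBOTS_TXT = """\
User-agent: *
Disallow: /legacy/
Crawl-delay: 10
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Ask the parser what a crawler obeying these rules may fetch.
product_allowed = parser.can_fetch("*", "https://www.mysite.com/anti-fungal-lotion")
legacy_allowed = parser.can_fetch("*", "https://www.mysite.com/legacy/old-page")
delay = parser.crawl_delay("*")

print(product_allowed)  # True
print(legacy_allowed)   # False
print(delay)            # 10
```

Keep in mind that robots.txt is a set of requests, not a lock. Well-behaved crawlers follow it, but it does not stop anyone from visiting those pages.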

Present Day Search

Of course, relevancy and popularity are broad ways of describing the many hundreds of factors that search engines consider when ranking your page. It’s fair to assume that, over time, Google has also removed the odd ranking factor or signal here and there. As such, search has naturally become quite complex.

For example, many of the stronger ranking signals Google now considers relate to the user’s experience (UX). How long is someone spending on a site? Do interstitial popups appear? Are they clicking through to new pages? As such, it’s likely that Google and others in the future will continue to look at these metrics alongside links. If they can determine new ways to measure a user’s interest, you can bet they’re going to show it!

This means that the more you pay attention to SEO over time, the better your chances are of getting to the top of page one.

Further Validation

To validate our newfound conviction on the importance of links, let’s review some additional evidence.

Understanding Google’s Dependency on Links to Crawl the Web

We know, and have explained at a high level, how Google’s spiders navigate their way around the absolutely enormous amount of information on the web: via links. If you’re still a bit hazy on the basics of this we highly recommend you watch the video from Google Webmasters we mentioned previously.

Since Google relies on links to crawl the web and index those pages, it goes without saying that links are part of the equation when ranking and returning the most relevant ones first.

Detailed Secondary Research from Credible Sources

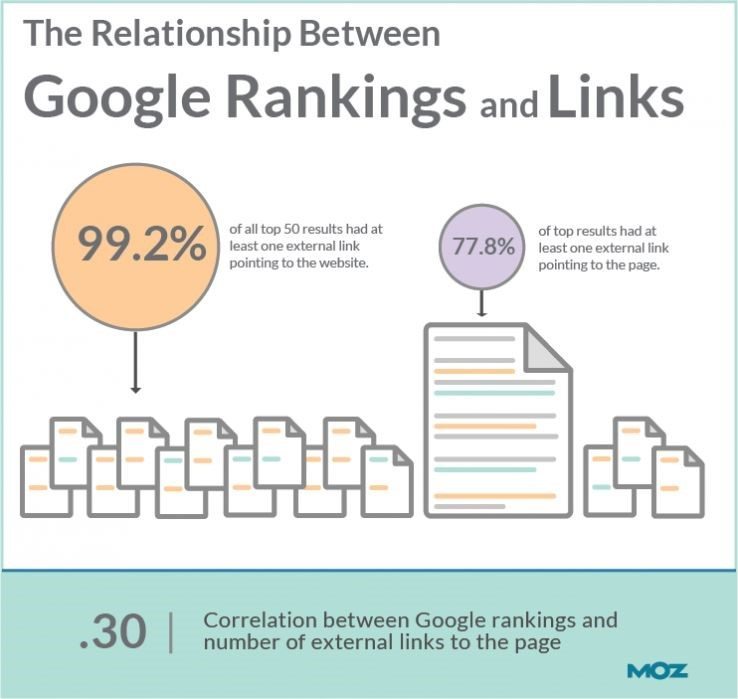

One of the most detailed search engine ranking factor reports, from Moz, went to the effort of analysing 17,600 keyword search results from Google and interviewing 150 of the world’s foremost search marketers.

Their analysis of 17,600 keywords was to show correlations between pages with certain features and how highly they ranked.

What was the feedback from the top 150 search marketers? Surprise surprise, the top 2 influential factors were:

Domain-level link authority features: This is based on link metrics such as quality of links, trust and domain-level PageRank.

Page-level link metrics: This is based on page level trust metrics such as quantity of linking root domains, links, anchor text distribution and quality of link sources.

Links on a page and domain level matter. The statistics say so, and so do the experts. The same theme is appearing. The quality and quantity of the links to your page are going to play a massive role in your search engine visibility, and therefore your business success.

Backlinko analysed 1 million search results. Guess what they found? “The number of domains linking to a page correlated with rankings more than any other factor”.

Your page is one amongst millions. Being found is essentially expecting a user to find a needle in a haystack. If we take this advice in the context of everything else we’re going to cover, we can supersize that needle.

Primary Evidence

We can also use tools like Ahrefs to show us the linking portfolio of a website and use this to get an idea of how the strength of their links is impacting ranking.

This might be a more advanced step as there are so many factors to consider. However, if you get used to the interface and the information it shows, this and other similar tools will typically show a correlation between backlink portfolio and ranking. Or, in this case, Ahrefs domain rating and URL rating.

Tesla.com, which ranks first for over 11,000 search terms, shows a URL rating of 87 (very, very high) and a domain rating of 69 (high).

UR (URL rating) – The strength of a URL’s backlink profile and the likelihood it will rank well on Google.

DR (domain rating) – The strength of the whole website’s backlink profile.

We can already start making advanced inferences. Remember how we said page-level link-based features were among the most important in the correlational study? This means if we have a really strong backlink profile to one particular URL we can potentially outrank a site with a very high overall domain score. There is much more to come from us, but if you’re interested now, check out the Ahrefs blog.

Note: For tesla.com, there is a higher URL score than domain. That’s easy to understand because it’s the homepage. It’s going to be common practice for people who are talking about this company to link to the homepage.

To stress even further how important backlinks are in shooting up the rankings, Ahrefs provides an approximate estimate of the number of links required to make the first page for a certain term. We typed in ‘car’ and this is what we got back.

The point of this section isn’t to be a guide on SEO tools, just to emphasise how important links are. That’s the main takeaway here. If you want to learn more about SEO tools we recommend this article on SEO industry experts’ favourite tools. We’ll be covering this in depth soon so keep an eye out.

Importantly, all of our own inferences are confirmed by the above studies from Moz and Backlinko.

The Top Three

Ultimately, we are trying to provide the most relevant and useful information to our users, given their original search. Neil Patel has a fantastic article on the 10 most important SEO tips you need to know for blogs, which can be boiled down into three key areas for across-the-board use.

Readability

It all starts with the question ‘is this a good experience for my users?’.

Chances are the best ‘yes’ for your users is also a ‘yes’ for search engines. You want as few distractions as possible between the user and what they were looking for, and Google will respond in kind.

For example, consider page speed. A site that takes 5 seconds to load will have lower user satisfaction and likely a higher bounce rate than a site with the same content that loads in 1 second. Heck, Strange Loop even reported a 7% drop in conversions with every second of load time (the same article has some great tips for improving page speed).

The same can be said for your content – the days of keyword stuffing are gone, and you need to write for your users first, search engines second, if you want to engage them. Users aren’t stupid, and Google doesn’t treat them as stupid either.

This point even goes as far as the seemingly innocuous things like your URLs. If your URL is readable and meaningful, users will follow the trail, and Google will follow. Resist the habit to incessantly catalog your website with structures that render your articles as contoso.com/articles/categories/15/article-135. Make that article about your great new feature at the top of the tree – contoso.com/my-great-feature-for-industry, and don’t use underscores in your URL – search engines don’t recognise them (they join words, instead of separating them).
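Turning a title into a readable, hyphen-separated URL segment is simple to automate. The `slugify` helper below is a hypothetical, minimal sketch that only handles plain ASCII text. Real sites usually lean on their CMS or a library for this.

```python
import re


def slugify(title: str) -> str:
    """Lowercase the title and join its words with hyphens (never underscores)."""
    slug = title.lower()
    # Replace every run of non-alphanumeric characters, underscores included,
    # with a single hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", slug)
    return slug.strip("-")


print(slugify("My Great Feature for Industry"))  # my-great-feature-for-industry
print(slugify("anti_fungal lotion!"))            # anti-fungal-lotion
```

The result slots straight in after the domain, for example contoso.com/my-great-feature-for-industry.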

Meta descriptions need to be unique and relevant to each page, as duplicate content is a wasted opportunity and could also get you penalised by Google. This is because Google reads the HTML of your site, not the actual page text we see. To this end, ensuring that all non-text content like images is appropriately named also helps search engines and users alike find your content. Fight that urge to name your next file mktg_hero_persona1-3.jpg. Yuck.
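Checking for duplicate meta descriptions is a good candidate for a small script. The sketch below uses Python’s standard `html.parser` module. The page paths and HTML snippets are hypothetical stand-ins for pages you would normally fetch or read from disk, and the class and function names are our own.

```python
from collections import defaultdict
from html.parser import HTMLParser


class MetaDescriptionParser(HTMLParser):
    """Collects the content of <meta name="description"> from one page."""

    def __init__(self):
        super().__init__()
        self.description = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        name = (attributes.get("name") or "").lower()
        if tag == "meta" and name == "description":
            self.description = (attributes.get("content") or "").strip()


def find_duplicate_descriptions(pages: dict[str, str]) -> dict[str, list[str]]:
    """Map each description used on more than one page to those pages."""
    by_description = defaultdict(list)
    for url, html in pages.items():
        parser = MetaDescriptionParser()
        parser.feed(html)
        if parser.description:
            by_description[parser.description].append(url)
    return {text: urls for text, urls in by_description.items() if len(urls) > 1}


# Hypothetical pages, invented for this example.
pages = {
    "/anti-fungal-lotion": '<head><meta name="description" content="Buy our lotion."></head>',
    "/about": '<head><meta name="description" content="Buy our lotion."></head>',
    "/contact": '<head><meta name="description" content="Get in touch with our team."></head>',
}

duplicates = find_duplicate_descriptions(pages)
print(duplicates)  # {'Buy our lotion.': ['/anti-fungal-lotion', '/about']}
```

Any description that shows up in the result is one you should rewrite so that each page describes itself.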

Lastly, one of the more recent (at time of writing, mid 2017) changes to Google’s algorithm was to explicitly prioritise websites with a good mobile experience. Not only will this get you ranked higher, your chances of users engaging and converting with your content will increase. Ensure that you don’t have one of those m.website.com subdomains (it’s an entirely different website to search engines anyway), and test for the different form factors you expect your users to interact with your content on.

Reputation

As we’ve already covered, it’s important how and from whom you get traffic; focus on outreach channels that are from reputable domains. For instance, if you are building a tech solution for the construction industry, you want to be featured on a website that most of the industry would visit, as opposed to a site that caters to a small niche of early adopters. Even better, think of a site that itself has regular content being posted, like an industry news site, or a non-competing horizontal tech company that requires users to sign on every day.

To the same effect, don’t ever forgo including social media in your SEO strategy. The impact that a well-considered channel strategy covering both social and SEO can have on your conversions is too good to pass up. This is especially true of time-sensitive events. If something is going ‘viral’, that is a good indication that it’s important to your users socially, and a good indication for the search tools too.

That’s inbound, but outbound matters too. Link to other websites with content that is both relevant and high quality (cough, Neil Patel link above). You want to be known by Google and the user as a site that is both popular and useful to the user’s end-goals.

As far as your actual content goes, be relevant, but don’t be stale. If you’re a blog, this is easy – post engaging content regularly. If you’re a product site, keep your blog on your top level domain, and update your website product pages with updates, change logs, features, new case studies, customer success stories – it’s your job to drive this content for the sake of your users’ attention.

Lastly, for goodness sake, remember what your users are looking for.

Relevance

On that note, how are we supposed to know what people are looking for? Thankfully search engines leave clues for us to digest, and there are a bunch of awesome tools out there to point you in the right direction! Some free, others, not so much. Let’s have a quick think about how we can make sure we’re focusing on the right keywords.

Start off by working backwards: what pain point does my business address? And what does my website say now? Or more importantly, what should it say? You need to have a high correlation between your core offerings and the copy on the landing pages you’re hoping to rank well for. This way we get the right visitors who convert, and the search engines see the value you’re providing, shooting you up the rankings. Cha ching!

This might sound obvious, but if you don’t have crystal clear value propositions your user won’t stick around to unravel the mystery. Your user will be annoyed by it, and so will Google. Basically, if you’re the best executive recruiter in town you probably shouldn’t be writing content about resume tips for interns.

As a first step, try to put together a list of the main ways your business helps people and what these people might be searching for. If your business is a physiotherapy practice, you might alleviate back pain regularly, and you might also see shoulder and knee pain among your most regular visits. You know you’re great at helping with back pain or, even more specifically, lower back pain.

A starting point for your content strategy!

Summary

It is a search engine’s job to both crawl and index content, and to provide users with a ranked list based on their search queries. It is your job to prepare and distribute your content in such a way that search engines rank you well and your users get what they were looking for. That is, in essence, SEO.

Don’t worry if some of this was a bit foreign. This topic is important to master as a digital marketer and we will give you the right stepping stone to get there.